What’s new today

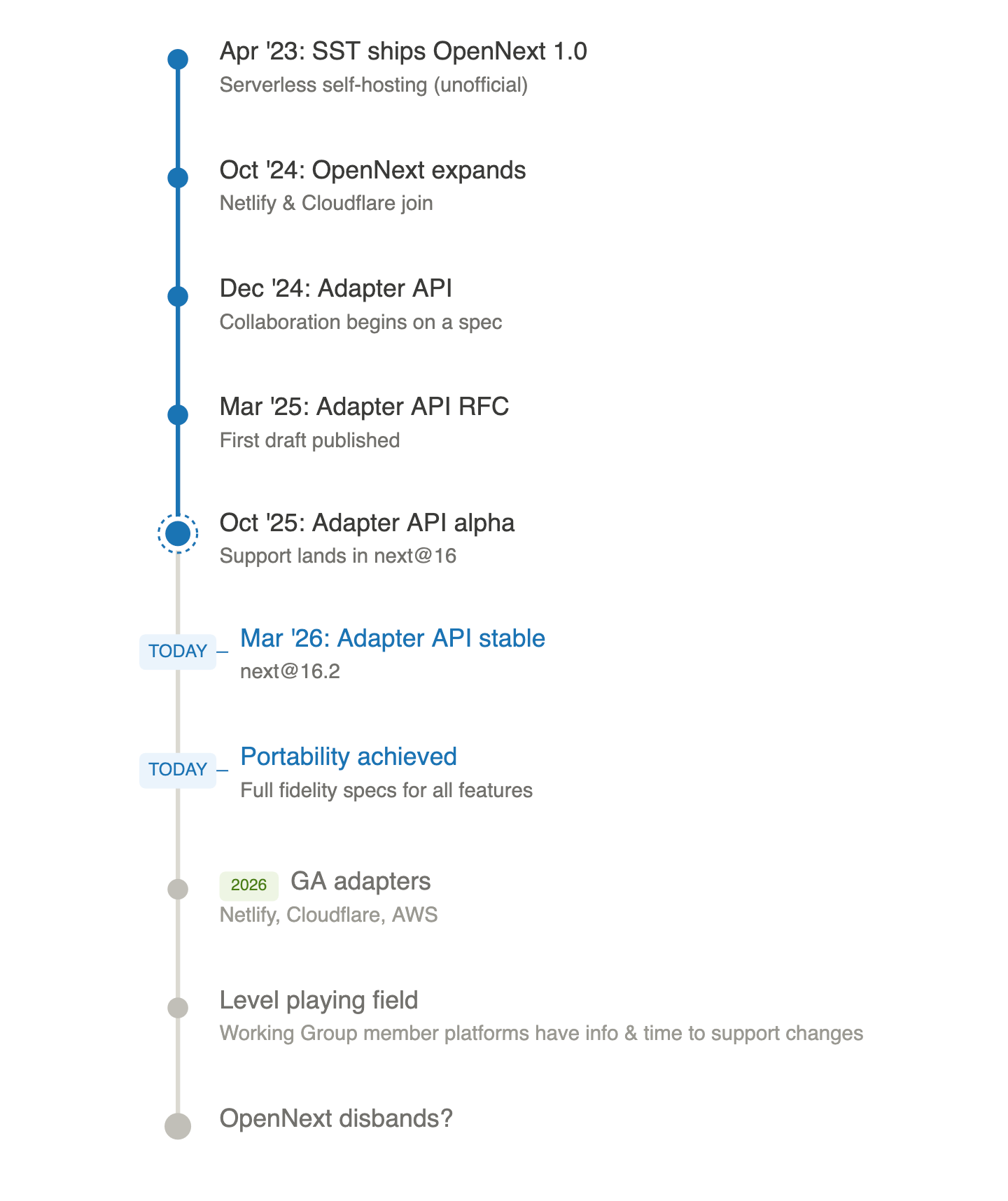

18 months ago, in coordination with Cloudflare, we joined OpenNext:

The goal of the OpenNext project is to make sure you can deploy Next.js sites to any cloud platform […]. We […] will start collaborating across providers, such as Cloudflare and SST, to make sure Next.js runs well everywhere.

Today, after a year of collaboration with Netlify, OpenNext, Cloudflare, Google, AWS Amplify, and other industry partners, the Next.js team is announcing several important milestones:

- The Deployment Adapter API is now stable, and Vercel is now using the stable Vercel adapter on its own platform.

- The full suite of 9000+ end-to-end Next.js tests has been made available as a formal contract for third-party platform testing. Conforming adapters will be documented as first-class deployment options on equal footing with Vercel.

- We are establishing a new, permanent Next.js Ecosystem Working Group, with established governance, public meeting notes, and an open invitation to industry partners.

- The Next.js team has made formal commitments to provide advance notice of changes to the framework, coordinate compatibility testing, thoroughly document capabilities, and consider partners’ feedback and change proposals.

These are remarkable milestones that will lead to even stronger Next.js capabilities on Netlify and across the web. Read on for technical insights into the challenges ahead on our roadmap, or skip ahead to what’s next.

Next.js challenges one year later

A year ago, we wrote about how we run Next.js on Netlify, calling out six major categories of challenges providers like us must contend with. Let’s check in.

Challenge: No adapter support

A year ago, we called out a major challenge: the Next.js framework lacked any formal deployment adapter concept.

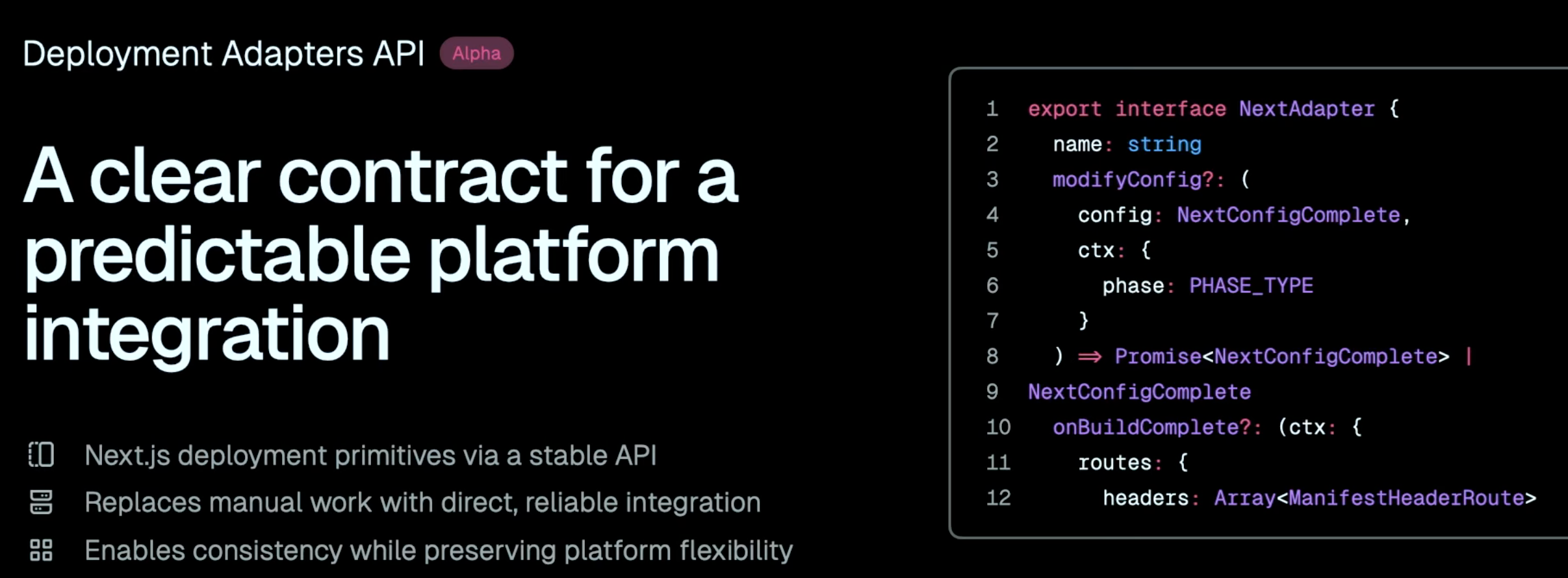

Just one week later, the Next.js team published a first public draft of an RFC for a Deployment Adapter API.

Over the course of the following months, we actively engaged with the Next.js team, OpenNext members Cloudflare and SST, eventually expanding to a formal Working Group along with Google/Firebase, AWS Amplify, and others. We shared feedback and iterated on Discord, on GitHub, and in bi-weekly calls.

Source: Next.js Conf 2025

By October, Next.js 16 launched with an alpha implementation of the Deployment Adapter API. Internally, Vercel had even been dogfooding this with an experimental Vercel adapter.

Since then, we’ve all been hard at work collaboratively developing our respective adapters from the ground up.

Today, the Deployment Adapter API has been marked stable in Next.js 16.2. Vercel’s adapter has been published and already powers builds for some projects on their platform. Until now, Next.js builds on Vercel had been powered by undocumented code paths in Next.js and closed-source code on Vercel. These are huge milestones that cannot be overstated.

Cloudflare’s and Netlify’s adapters are in active development. Netlify’s adapter will be published and rolled out automatically to projects on compatible versions later this year, as soon as it is complete, stable, reliable, secure, and performant.

This has been a highly collaborative effort across competitive divides and a great accomplishment for the open web. We commend Vercel, the Next.js team, Jimmy Lai, and all our Working Group partners for seeing this through.

Challenge: Undocumented behaviors & lack of open standards

A year ago, we called out the challenges posed by undocumented complexity in Next.js. Much of it arises from the framework’s multiple layers of caching, which carry implicit functional and nonfunctional requirements for deploying and operating at scale, often with nonstandard infrastructural needs.

Since then, the Next.js team has:

- overhauled the docs to remove references to Vercel

- created project templates for many platforms

- opened up its full end-to-end test suite, removing Vercel-specific assertions and assumptions, and documented how others can leverage the suite to test their own deployment adapters

These are excellent developments that we welcome wholeheartedly.

Many undocumented behaviors were hidden behind a private minimalMode flag intended for Vercel deployments. As noted above, Vercel has begun rolling out its own open-source adapter, eliminating the need for minimal mode. We therefore expect these code paths to be deleted in the next major release, another significant step forward.

Until recently, a core challenge remained: the architectural and infrastructural requirements for supporting the full breadth of Next.js as intended were neither self-evident nor described explicitly in its documentation. That matters all the more when those requirements are unique in the industry. As of this week, we are proud to announce that nearly all Next.js capabilities are now documented well enough that full fidelity can be achieved on any platform that wishes to do so. The Next.js team, in collaboration with Netlify and other partners, has clearly specified and delineated “functional fidelity” and “performance fidelity” for each capability and, where novel infrastructure is required, documented it appropriately. This is a major win for self-hosters and for the open web.

Challenges: Roadmap & release visibility

Last year, we noted the lack of roadmap transparency as well as the unpredictability of Next.js releases as challenging for providers, partners, and end-user developers. Other frameworks keep the public apprised to some extent and encourage participation, while keeping a small circle of ecosystem partners even closer to the action.

Since then, we’ve seen much better transparency:

- Plans for Next.js 16 were shared publicly three months in advance.

- The connections created by the formation of the Deployment Adapter API Working Group opened lines of communication that were regularly used to share upcoming changes and draft release notes, and to circulate RFCs privately.

- A security group was established to coordinate early warning and mitigations for upcoming CVEs.

Today, the Next.js team announced that the Deployment Adapter Working Group will become the Next.js Ecosystem Working Group, which will be open to new members and will post meeting summaries publicly. This increased transparency is a win for the community.

Among future ideas, transparency could be improved further with a living public roadmap, an active, structured RFC process, and recurring open community calls.

Beyond adapters: what we’d like to tackle next

Let’s dive into specific, concrete technical challenges that the Deployment Adapter API has not solved. Each of these is an example of multiple compounded challenges: an (until recently!) under-documented requirement, not built on the open web platform, with complex, nuanced architectural and infrastructural constraints that are unique to Next.js.

In the next year, we’d love to work with the Next.js team and any willing partners on addressing these challenges. We share these here in a spirit of constructive collaboration and transparency.

Fair warning: this section gets very technical. Feel free to skip ahead.

PPR requires bespoke CDN infra

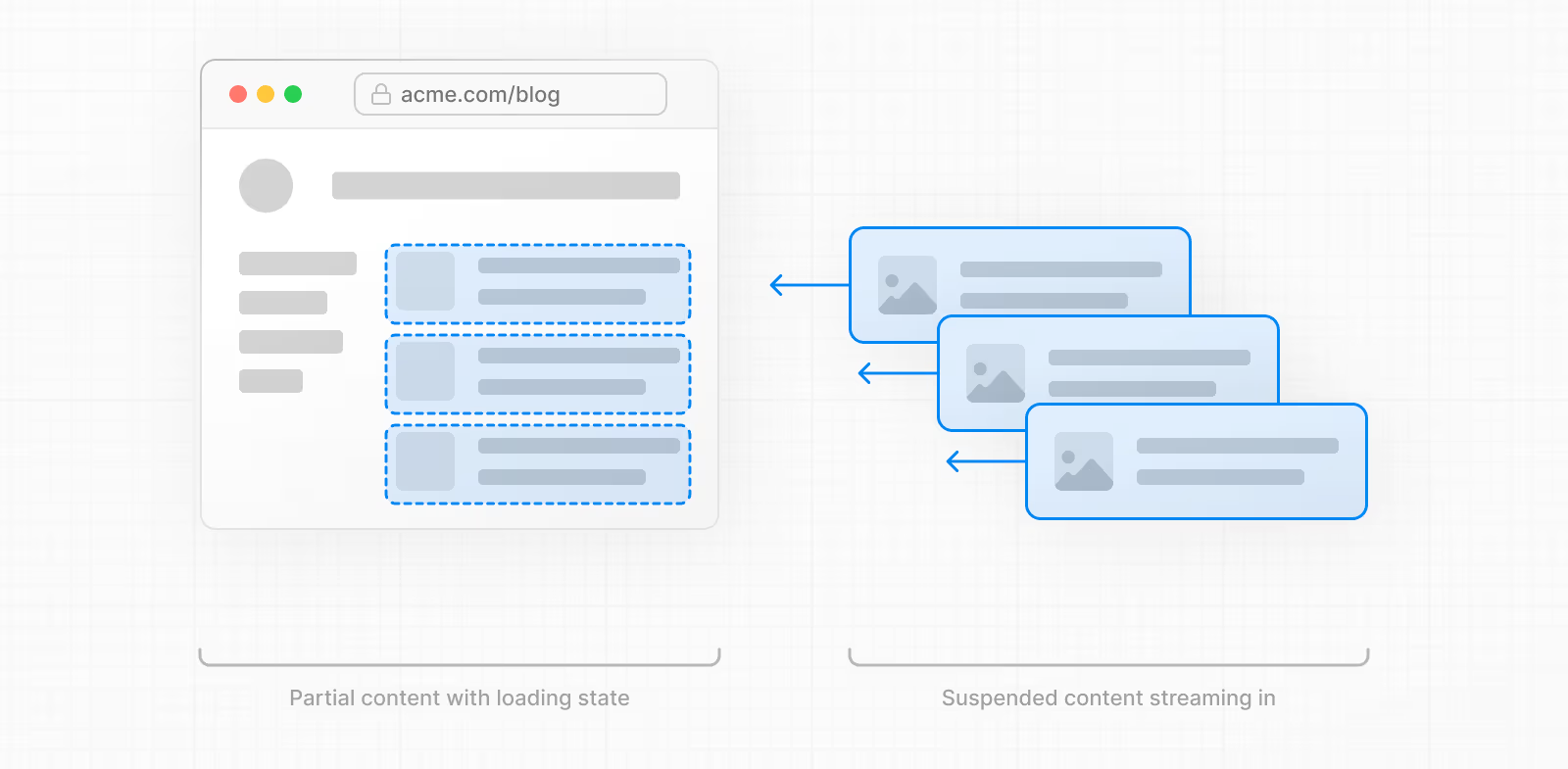

Partial Prerendering (PPR) is a hybrid rendering strategy unique to Next.js.

Source: https://nextjs.org/docs/app/getting-started/cache-components

It involves prerendering a “static shell” HTML page at build time and serving it as the start of a single response stream, which immediately continues with a dynamic “resumed” response stream. This dynamic segment contains <script> tags (streamed in after the static shell’s closing </html> tag) that swap the dynamic “suspended” content onto the page and into the React component tree as it arrives. The static shell can also be revalidated, in which case only the updated shell, which cannot be used as a full response on its own, must be cached.

It’s a very sophisticated trick to achieve a balance of fast initial response and dynamic components.

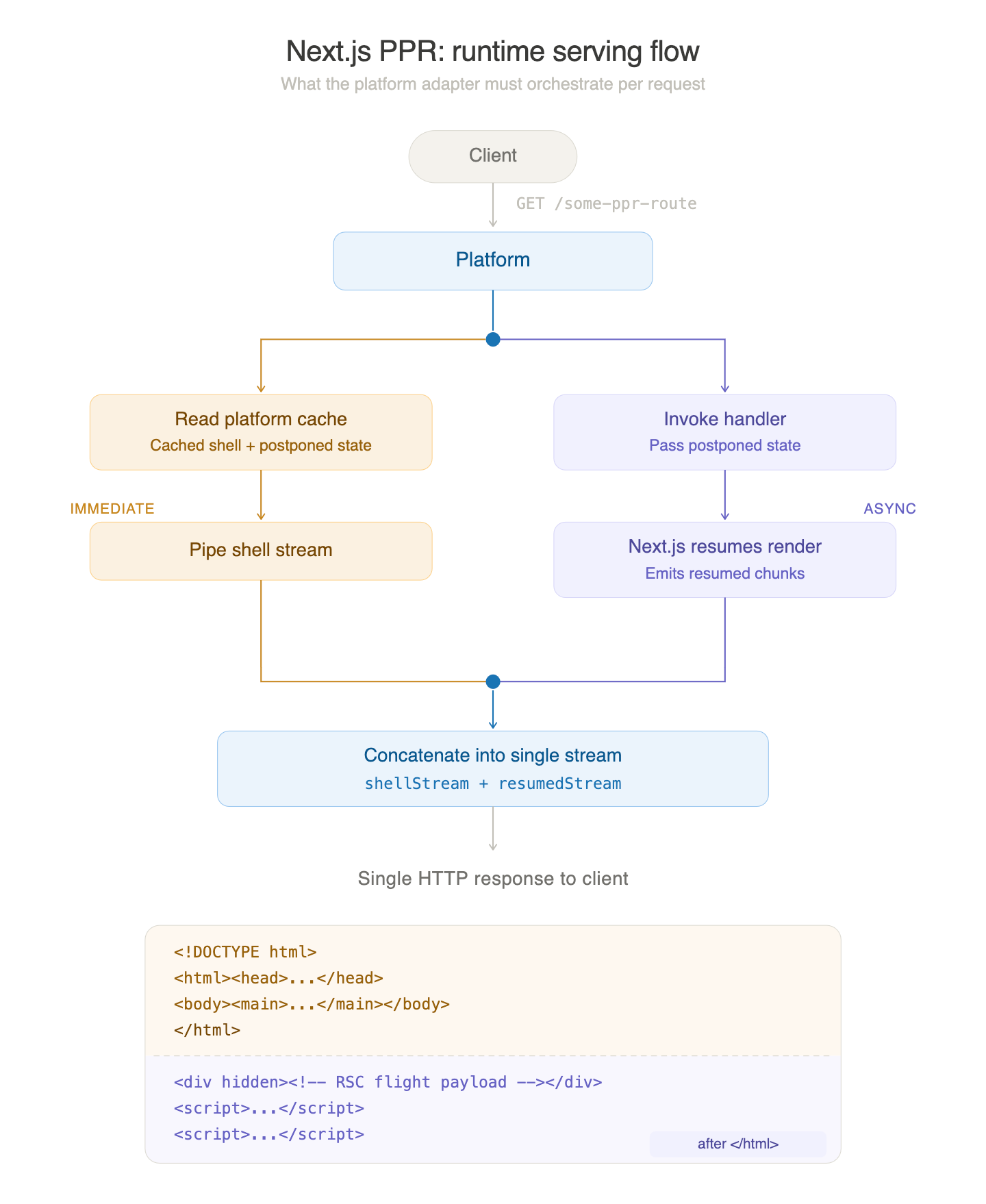

What isn’t obvious is that many of the usual expectations for web architecture go out the window for the promise of PPR to truly be realized (and to match the experience on Vercel).

The “static shell” is clearly intended to be served immediately from a CDN, the very purpose of PPR being to achieve very low Time To First Byte (TTFB) and First Contentful Paint (FCP) despite rendering some dynamic content.

On the other hand, the dynamic response stream must be generated at request time by some form of compute invoking the Next.js renderer.

With PPR, these two responses must be stitched into a single HTTP stream, transparently from the client’s perspective. In addition, the dynamic render is intended to be fired off in parallel and only appended serially to the static shell response stream (the stream is “resumed”). Note also that the full response cannot be cached, as it contains the dynamically stitched part appended to the (nominally cacheable) static HTML document.
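To make the stitching requirement concrete, here is a minimal TypeScript sketch under stated assumptions: chunks are plain strings, the static shell arrives as an async iterable (standing in for a CDN-cached body), and `renderDynamic` stands in for the Next.js renderer. The names `startInBackground` and `stitchPprResponse` are hypothetical and are not part of Next.js or any platform API. It shows only the ordering contract: the dynamic render starts before the shell is flushed, yet its output is appended strictly after the shell.

```typescript
// Hypothetical sketch of PPR-style stitching, not Next.js's implementation:
// a cached static shell is flushed immediately while the dynamic "resumed"
// render, started in parallel, is appended afterwards in the same stream.

// Drain `source` in the background so its work starts right away; the
// returned generator replays its chunks in order whenever the consumer reads.
function startInBackground(source: AsyncIterable<string>): AsyncGenerator<string> {
  const buffer: string[] = [];
  let finished = false;
  let failed = false;
  let failure: unknown;
  let wake: (() => void) | null = null;

  // Wake the consumer if it is currently waiting for a chunk.
  const notify = (): void => {
    const waiter = wake;
    wake = null;
    if (waiter) waiter();
  };

  void (async () => {
    try {
      for await (const chunk of source) {
        buffer.push(chunk);
        notify();
      }
    } catch (err) {
      failed = true;
      failure = err;
    } finally {
      finished = true;
      notify();
    }
  })();

  return (async function* () {
    while (true) {
      if (buffer.length > 0) {
        yield buffer.shift() as string;
      } else if (finished) {
        if (failed) throw failure; // surface render errors to the consumer
        return;
      } else {
        await new Promise<void>((resolve) => {
          wake = () => resolve();
        });
      }
    }
  })();
}

async function* stitchPprResponse(
  staticShell: AsyncIterable<string>,
  renderDynamic: () => AsyncIterable<string>,
): AsyncGenerator<string> {
  // 1. Fire off the dynamic render first so it overlaps with step 2.
  const resumed = startInBackground(renderDynamic());
  // 2. Flush the static shell (in production, ideally straight from a CDN).
  yield* staticShell;
  // 3. Append the resumed chunks serially, after the shell's closing </html>.
  yield* resumed;
}
```

The logic itself is small; the difficulty described above is where it runs. Step 2 should be served from CDN cache, step 1 needs compute, and both must end up in one HTTP response.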

As far as we know, no CDN other than Vercel’s has this capability.

As far as we know, no other framework has any such requirement. The closest framework feature is Astro Server Islands, which simply includes a client-side script in the static shell that fetches the dynamic response from the browser.

Until recently, none of this was mentioned in the PPR, deploying, or self-hosting documentation.

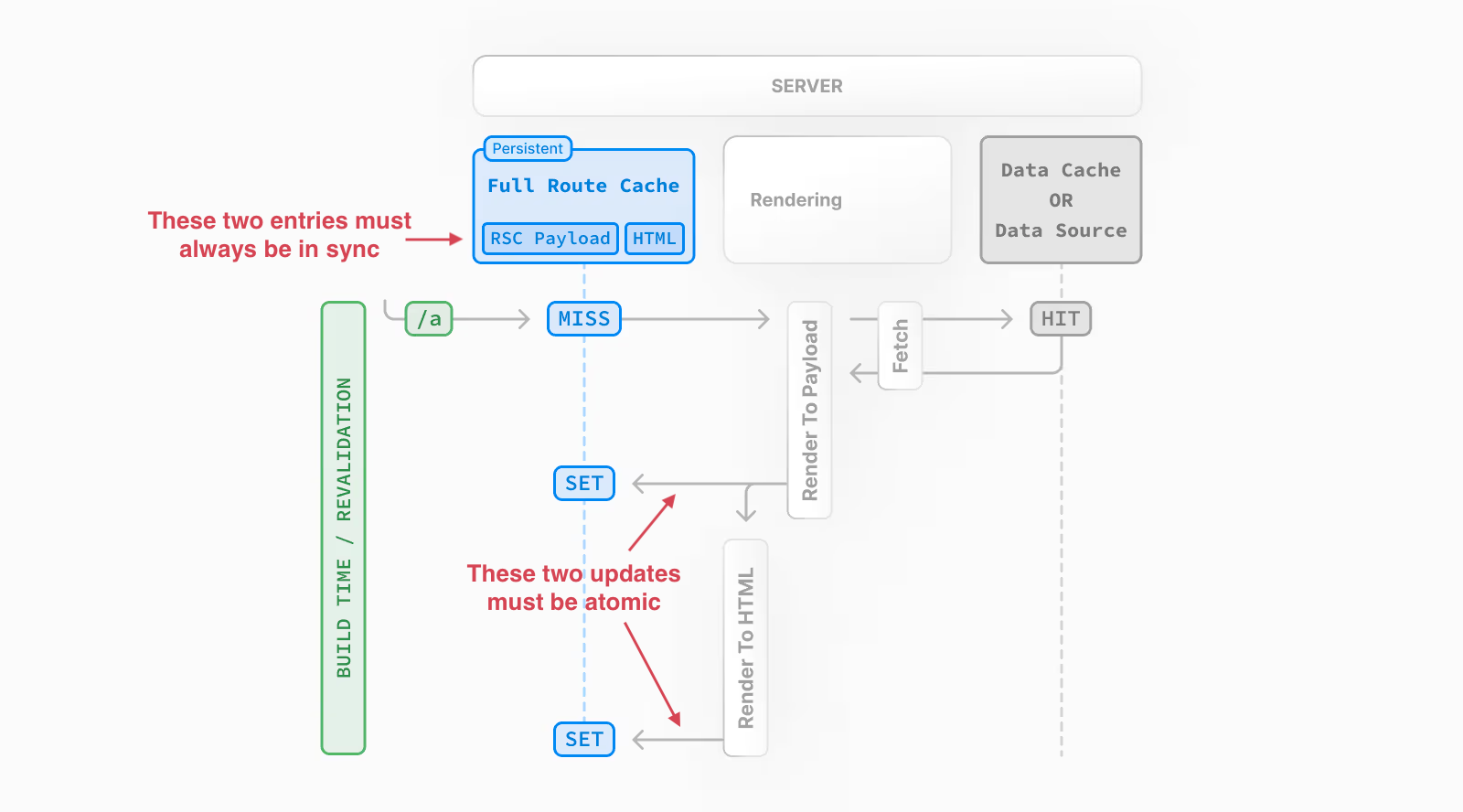

Cache revalidation requires bespoke CDN infra

A complete, fully compliant implementation of Next.js cache revalidation requires atomic revalidation of groups of coupled response cache entries. In Pages Router, this is a given path’s HTML response and JSON payload. In App Router, the simplest case is a given path’s HTML response and RSC payload (together known as the Full Route Cache).

Apps misbehave if this is not ensured.

Source: https://nextjs.org/docs/15/app/guides/caching#full-route-cache (annotations in red are added)

This requirement is surprising to anyone familiar with standard HTTP caching, which Next.js otherwise leans into for response caching. There is no mechanism in the web platform to mark an HTTP response as cacheable in a public cache with the requirement that a second response, occurring out of band, must always be cached transactionally with the former.
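A minimal sketch of the difference, assuming a single-process, in-memory cache (a real CDN is distributed, which is what makes this hard). `PerUrlCache`, `AtomicRouteCache`, `renderAt`, and the `/blog.rsc` URL are hypothetical stand-ins, not a Next.js or CDN API. With per-URL entries, the HTML and RSC payload for the same route can drift apart; storing both in one record per route lets a revalidation swap them together.

```typescript
// Hypothetical sketch, not any CDN's real API: two ways of caching the coupled
// HTML + RSC payload of one App Router route.
type RoutePair = { html: string; rsc: string };

// Conventional HTTP caching: each URL is an independent entry that can be
// refreshed, evicted, or revalidated on its own schedule.
class PerUrlCache {
  private entries = new Map<string, string>();
  put(url: string, body: string): void {
    this.entries.set(url, body);
  }
  get(url: string): string | undefined {
    return this.entries.get(url);
  }
}

// Atomic variant: both representations live in ONE record per route, so a
// revalidation swaps the pair in a single write.
class AtomicRouteCache {
  private entries = new Map<string, RoutePair>();
  revalidate(path: string, render: () => RoutePair): void {
    const fresh = render(); // render both before publishing either
    this.entries.set(path, fresh); // readers see the old pair or the new pair, never a mix
  }
  get(path: string): RoutePair | undefined {
    return this.entries.get(path);
  }
}

const renderAt = (v: number): RoutePair => ({ html: `<h1>v${v}</h1>`, rsc: `{"v":${v}}` });

// Per-URL: only the HTML URL happens to be refreshed after the data changes.
const perUrl = new PerUrlCache();
perUrl.put("/blog", renderAt(1).html);
perUrl.put("/blog.rsc", renderAt(1).rsc); // hypothetical URL for the RSC payload
perUrl.put("/blog", renderAt(2).html);
// perUrl now pairs v2 HTML with a v1 RSC payload, so a later client-side
// navigation could render content that disagrees with the loaded page.

const atomic = new AtomicRouteCache();
atomic.revalidate("/blog", () => renderAt(1));
atomic.revalidate("/blog", () => renderAt(2));
```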

Serving two distinct but coupled cache entries behind a traditional CDN introduced complexity, risking inconsistent responses for the same underlying page. The AWS adapter had to employ some clever workarounds to ensure reliable fidelity.

— Nicolas Dorseuil (OpenNext AWS)

As far as we know, no CDN other than Vercel’s has this capability.

As far as we know, no other framework has any such requirement.

Until recently, none of this was mentioned in the caching, deploying, or self-hosting documentation.

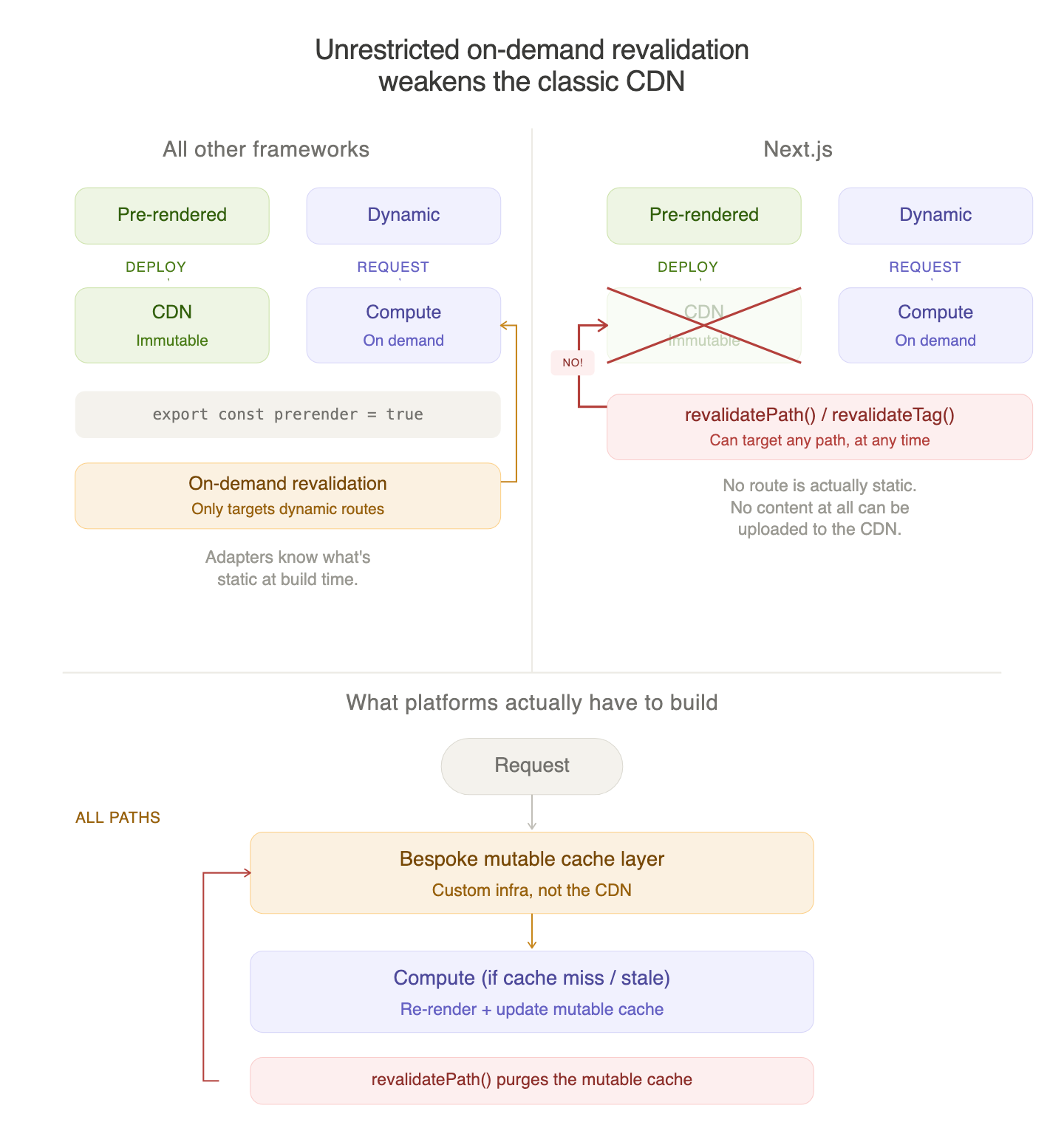

On-demand revalidation requires bespoke CDN infra

On-demand revalidation in Next.js is a mechanism whereby an app can call revalidatePath or revalidateTag in order to revalidate cached responses at any time, typically from a webhook.

Next.js places no restriction on which paths can be revalidated on demand. Even if your site does not use on-demand revalidation at all, we must treat it the same way as any other site, because the framework does not track this information.

The atomic and immutable deploy model, where assets deployed to a CDN are immutable within a deploy, is fundamentally incompatible with Next.js’s implementation of on-demand revalidation. Every platform that adopted this model in the last decade (Netlify, Vercel, Cloudflare Pages, AWS Amplify, Firebase App Hosting, Azure Static Web Apps…) has had to work around this (or not support it) by introducing some form of custom, mutable, low-latency cache layer that must be exercised on all paths, and by not actually uploading any content that was pre-rendered at build time to the CDN.

The Cloudflare Pages adapter was ultimately killed off because it was fundamentally incompatible with the Next.js revalidation model. It was incapable of supporting ISR due to its reliance on Vercel’s Edge Runtime with Next.js, where the framework assumed that you only did SSR at the edge. We had to join OpenNext and re-build an adapter on top of their well-established architecture and caching layer in order to support ISR on Workers and bring it to the edge.

— James Anderson (OpenNext Cloudflare)

In other words, because Next.js has no concept of a truly static route, few web deployment platforms can safely serve pre-rendered Next.js output as CDN assets; instead, requests to all routes must go through an additional cache layer because any page could be revalidated at any time.
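The following sketch models that extra layer, assuming an in-memory map and simplifying revalidation to an outright purge (Next.js may instead serve stale content while regenerating). `OnDemandCache`, `purgePath`, and `purgeTag` are hypothetical names that only loosely mirror what revalidatePath and revalidateTag ask of a platform. The point is structural: because `handle` cannot know in advance which paths will be purged, every route, including ones prerendered at build time, must be looked up through it.

```typescript
// Hypothetical model (not Next.js or any platform's real API) of the mutable
// cache layer that sits in front of every route when any path or tag can be
// revalidated on demand.
interface CachedPage {
  body: string;
  tags: string[];
}

class OnDemandCache {
  private pages = new Map<string, CachedPage>();
  private render: (path: string) => CachedPage;

  constructor(render: (path: string) => CachedPage) {
    this.render = render;
  }

  // Every request must pass through this lookup; a build-time file served
  // straight from an immutable CDN could never be updated mid-deploy.
  handle(path: string): string {
    let page = this.pages.get(path);
    if (page === undefined) {
      page = this.render(path); // miss or purged: regenerate and store
      this.pages.set(path, page);
    }
    return page.body;
  }

  // Simplification of what a revalidatePath() call asks of the platform.
  purgePath(path: string): void {
    this.pages.delete(path);
  }

  // Simplification of what a revalidateTag() call asks of the platform.
  purgeTag(tag: string): void {
    for (const [path, page] of this.pages) {
      if (page.tags.includes(tag)) this.pages.delete(path);
    }
  }
}

let dataVersion = 1;
const cache = new OnDemandCache((path) => ({
  body: `${path} @ v${dataVersion}`,
  tags: ["posts"],
}));
```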

As far as we know, no other framework behaves this way. Others all provide an explicit prerender opt-in that adapters can rely on as an immutability guarantee, such as Astro’s prerender = true export, SvelteKit’s prerender = true export, and Nuxt’s prerender: true option under routeRules configuration—all of which are distinct from ISR mode.
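Here is a sketch of why that opt-in matters to an adapter, assuming a hypothetical route manifest with a boolean `prerender` flag per route (loosely modeled on the opt-ins named above; `planRoutes` and the placement labels are illustrative only). When the framework guarantees that a prerendered route cannot change within a deploy, the adapter can upload it as an immutable CDN asset; when any path may be revalidated on demand, every route has to go through the mutable cache layer instead.

```typescript
// Hypothetical adapter-side planning step. `prerender` mirrors explicit
// opt-ins such as Astro's and SvelteKit's `export const prerender = true`.
interface RouteInfo {
  path: string;
  prerender: boolean;
}

type Placement = "immutable-cdn-asset" | "cache-layer-and-compute";

function planRoutes(routes: RouteInfo[], anyPathRevalidatable: boolean): Map<string, Placement> {
  const plan = new Map<string, Placement>();
  for (const route of routes) {
    // Upload as an immutable asset only if the framework guarantees the
    // output cannot change until the next deploy.
    const immutable = route.prerender && !anyPathRevalidatable;
    plan.set(route.path, immutable ? "immutable-cdn-asset" : "cache-layer-and-compute");
  }
  return plan;
}

const routes: RouteInfo[] = [
  { path: "/about", prerender: true },
  { path: "/feed", prerender: false },
];
const withOptIn = planRoutes(routes, false); // explicit opt-in is a guarantee
const withoutOptIn = planRoutes(routes, true); // any path may be revalidated
```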

Until recently, none of this was mentioned in the caching, deploying, or self-hosting documentation.

Finish line in sight

Historically, Next.js has:

- chosen constraints that expect platform primitives that are neither standard (e.g., part of HTTP, the Web Platform, WinterTC server runtime standards, and so on) nor conventional;

- developed these capabilities on the Vercel platform in tandem;

- released such changes without advance notice to other platforms;

- not appropriately documented these constraints and functional requirements.

We are not claiming that these choices were made intentionally or with ill intent. Each framework chooses different sets of trade-offs. The Next.js team chooses to push well beyond the current capabilities of the Web platform in order to iterate quickly on new capabilities. Understandably, this occasionally results in the first two of the above outcomes. But historically it has also come with the latter two, avoidable outcomes, to the detriment of the Next.js ecosystem and the open web.

Until now.

We are happy to share that the Next.js team has made the following commitments.

First, the team commits to adequately documenting all Next.js capabilities such that full fidelity can be achieved on any platform that wishes to do so. “Functional fidelity” will be specified, documenting the behavioral requirements of a feature, as will “performance fidelity”, documenting the intended performance characteristics. In both cases, unusual infrastructural requirements for achieving such fidelity will be documented, and a reference implementation will be provided or described.

In fact, we are happy to share that as of this week, in collaboration with Netlify, Cloudflare, OpenNext, and Google, the Next.js team has updated the Next.js documentation to meet these criteria for all current capabilities in Next.js 16.

Second, the team commits to giving sufficient advance notice to deployment platforms (via the Ecosystem Working Group) of upcoming changes with infrastructural requirements, such that these can plausibly be planned and executed before stabilization of the change in Next.js.

With all these improvements in place, we can now see the finish line on the horizon.

The last mile

The Deployment Adapter API is a great leap forward. We want to be clear about that. The working group process has been very constructive, the API is well designed, and we’re genuinely optimistic about what it enables. We commend everyone involved for the time and effort they’ve put in and for seeing this through to GA.

But as our friends at Google said today, the adapter API is an important milestone, not the finish line:

Getting Next.js and all its bells and whistles—middleware, advanced rewrites/redirects/headers, ISR, and now cache components—working behind a traditional CDN has been next to impossible. Throw in multi-tenancy, that we’re building on top of undocumented internal APIs, and are at the very bleeding edge of what’s just becoming possible in standards based tooling like WASM… let’s just say the now stable Deployment Adapters API is helping us sleep better at night. We look forward to continued collaboration in the new Next.js Ecosystem Working Group as we solve these challenges together.

— James Daniels (Developer Relations Engineer, Google Cloud)

We’ve detailed concrete, meaningful challenges that remain: PPR’s implicit, unique, highly unusual CDN requirements; the undocumented atomicity constraints of cache revalidation, intractable without complex bespoke CDN infrastructure; and the inability to lean on the power of the CDN to serve static assets due to a unique limitation of on-demand revalidation.

These are fundamental architectural challenges that make Next.js very difficult to deploy everywhere with full fidelity. Until just last week, they were not documented well enough to allow platforms to achieve full feature fidelity.

We’ll continue working with the Next.js team, with our fellow OpenNext members (SST, Cloudflare, and independent OSS contributors), Google, the new Ecosystem Working Group, and with the broader ecosystem to tackle these remaining challenges. Now that the documentation piece is addressed, we’ll focus on exploring ways to increase portability via changes to the framework itself.

As for us: Netlify’s adapter is in active development and will gladly ship as a verified adapter alongside others later this year, with automatic rollout to projects on compatible Next.js versions. Hundreds of thousands of developers rely on Netlify to build and run their Next.js apps, so we’re treating this with the care it deserves. We’ll start this rollout when we’re confident it’s complete, stable, reliable, secure, and performant. We expect this to be seamless for users (don’t worry, support for the current OpenNext adapter isn’t going anywhere); we’ll share more about what this will unlock as we get closer.

The OpenNext initiative set out years ago to make Next.js work well everywhere. The adapter API is a foundational piece of that puzzle. A few more pieces remain and the gears are already in motion to set these in their place, collaboratively, across competitive divides. Let’s get to work.

Read more

Next.js, OpenNext, Netlify, and Google Firebase have published simultaneous companion posts:

- Read about the stable Adapter API, verified adapters, and the new working group in the Next.js blog post

- Read about OpenNext’s 3-year journey that got us here in the OpenNext blog post

- Read about the Google Cloud perspective in the Firebase blog post