Changelog

-

The Netlify Cache API now has full support for `stale-while-revalidate` (SWR). This was a previous limitation of the Cache API that has now been lifted, thanks to a request from a customer.

When using `fetchWithCache` with the `swr` option, background revalidation is handled automatically. If a response is stale but still within the SWR window, it’s served immediately while a fresh response is fetched and cached in the background.

    import { fetchWithCache, DAY, HOUR } from "@netlify/cache";
    import type { Config, Context } from "@netlify/functions";

    export default async (req: Request, context: Context) => {
      const response = await fetchWithCache("https://example.com/expensive-api", {
        ttl: 2 * DAY,
        swr: HOUR,
        tags: ["product"],
      });

      return response;
    };

    export const config: Config = {
      path: "/api/products",
    };

For users who interact directly with `cache.match` and `cache.put`, a new `needsRevalidation` method lets you check whether a cached response is stale and trigger background revalidation manually:

    import { needsRevalidation, cacheHeaders, MINUTE, HOUR } from "@netlify/cache";
    import type { Config, Context } from "@netlify/functions";

    const cache = await caches.open("my-cache");

    export default async (req: Request, context: Context) => {
      const request = new Request("https://example.com/expensive-api");
      const cached = await cache.match(request);

      if (cached) {
        if (needsRevalidation(cached)) {
          context.waitUntil(
            fetch(request).then((fresh) => {
              const response = new Response(fresh.body, {
                headers: {
                  ...Object.fromEntries(fresh.headers),
                  ...cacheHeaders({ ttl: MINUTE, swr: HOUR }),
                },
              });

              return cache.put(request, response);
            })
          );
        }

        return cached;
      }

      const fresh = await fetch(request);
      const response = new Response(fresh.body, {
        headers: {
          ...Object.fromEntries(fresh.headers),
          ...cacheHeaders({ ttl: MINUTE, swr: HOUR }),
        },
      });

      context.waitUntil(cache.put(request, response.clone()));

      return response;
    };

    export const config: Config = {
      path: "/api/data",
    };

Learn more in the Cache API documentation and the caching overview.
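To make the two windows concrete, here is a minimal sketch of how a response's age relates to `ttl` and `swr`. It is an illustration only, not the `@netlify/cache` implementation: `classifyFreshness` and the `Freshness` type are hypothetical names, the local `HOUR` and `DAY` constants are assumed to be in seconds, and treating a response as fresh while its age is strictly below `ttl` is an assumption borrowed from standard HTTP caching.

```typescript
// Hypothetical helper for illustration; not part of @netlify/cache.
type Freshness = "fresh" | "stale-while-revalidate" | "expired";

// Local constants, assumed to be expressed in seconds.
const HOUR = 60 * 60;
const DAY = 24 * HOUR;

// Classify a cached response by its age relative to the ttl and swr windows.
function classifyFreshness(ageSeconds: number, ttl: number, swr: number): Freshness {
  if (ageSeconds < ttl) {
    return "fresh"; // serve from cache, no revalidation needed
  }
  if (ageSeconds < ttl + swr) {
    return "stale-while-revalidate"; // serve stale copy, refresh in the background
  }
  return "expired"; // outside both windows: fetch a fresh response first
}

console.log(classifyFreshness(1 * DAY, 2 * DAY, HOUR)); // fresh
console.log(classifyFreshness(2 * DAY + 30 * 60, 2 * DAY, HOUR)); // stale-while-revalidate
console.log(classifyFreshness(3 * DAY, 2 * DAY, HOUR)); // expired
```

With `ttl: 2 * DAY` and `swr: HOUR`, as in the `fetchWithCache` example, a response would be served stale only during the hour that follows its two-day freshness period.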

-

Google’s Gemini 3.1 Flash Image Preview, also known as Nano Banana 2, is now available through AI Gateway. You can call this image generation model from Netlify Functions without configuring API keys; the AI Gateway provides the connection to Google for you.

Example usage in a Function:

    import { GoogleGenAI } from '@google/genai';

    export default async (request: Request) => {
      const url = new URL(request.url);
      const prompt = url.searchParams.get('prompt') || 'two happy bananas';
      const ai = new GoogleGenAI({});

      try {
        const response = await ai.models.generateContent({
          model: 'gemini-3.1-flash-image-preview',
          contents: prompt,
          config: {
            imageConfig: {
              aspectRatio: '16:9',
              imageSize: '1K'
            }
          }
        });

        // Find the first response part that carries inline image data.
        let imagePart = null;
        for (const part of response.candidates[0].content.parts) {
          if (part.inlineData) {
            imagePart = part.inlineData;
            break;
          }
        }

        // Fail with a clear error if the model returned no image.
        if (!imagePart) {
          throw new Error('No image data in model response');
        }

        const bytes = Buffer.from(imagePart.data, 'base64');
        const mimeType = imagePart.mimeType || 'image/png';

        return new Response(bytes, {
          status: 200,
          headers: {
            'Content-Type': mimeType,
            'Cache-Control': 'no-store'
          }
        });
      } catch (err) {
        return new Response(JSON.stringify({ error: String(err), prompt }), {
          status: 500,
          headers: { 'Content-Type': 'application/json' }
        });
      }
    };

This model works across any function type and is compatible with other Netlify primitives such as caching and rate limiting, giving you control over request behavior across your site.

Learn more in the AI Gateway documentation.

-

OpenAI’s GPT-5.3-Codex model is now available through Netlify’s AI Gateway with zero configuration required.

Use the OpenAI SDK directly in your Netlify Functions without managing API keys or authentication. The AI Gateway handles everything automatically. Here’s an example using the GPT-5.3-Codex model:

    import OpenAI from 'openai';

    export default async () => {
      const openai = new OpenAI();

      const response = await openai.responses.create({
        model: 'gpt-5.3-codex',
        input: 'How can AI improve my coding?'
      });

      return Response.json(response);
    };

GPT-5.3-Codex is available for all Function types. You get automatic access to Netlify’s caching, rate limiting, and authentication infrastructure.

Learn more in the AI Gateway documentation.

-

Google’s Gemini 3.1 Pro Preview model is now available through Netlify’s AI Gateway with zero configuration required.

Use the Google GenAI SDK directly in your Netlify Functions without managing API keys or authentication. The AI Gateway handles everything automatically. Here’s an example using the Gemini 3.1 Pro Preview model:

    import { GoogleGenAI } from '@google/genai';

    export default async () => {
      const ai = new GoogleGenAI({});

      const response = await ai.models.generateContent({
        model: 'gemini-3.1-pro-preview',
        contents: 'How can AI improve my workflow?'
      });

      return Response.json(response);
    };

Gemini 3.1 Pro Preview is available for all Function types. You get automatic access to Netlify’s caching, rate limiting, and authentication infrastructure.

Learn more in the AI Gateway documentation.

-

Anthropic’s Claude Sonnet 4.6 model is now available through Netlify’s AI Gateway and Agent Runners with zero configuration required.

Use the Anthropic SDK directly in your Netlify Functions without managing API keys or authentication. The AI Gateway handles everything automatically. Here’s an example using the Claude Sonnet 4.6 model:

    import Anthropic from '@anthropic-ai/sdk';

    export default async () => {
      const anthropic = new Anthropic();

      const response = await anthropic.messages.create({
        model: 'claude-sonnet-4-6',
        max_tokens: 4096,
        messages: [
          {
            role: 'user',
            content: 'How can AI improve my coding?'
          }
        ]
      });

      return Response.json(response);
    };

Claude Sonnet 4.6 is available for all Function types and Agent Runners. You get automatic access to Netlify’s caching, rate limiting, and authentication infrastructure.

Learn more in the AI Gateway documentation and Agent Runners documentation.

-

You can now sync changes from different agent runs in the Netlify dashboard. This is especially helpful if you use Netlify Drop to publish or update your project and don’t use Git workflows to synchronize versions of your project.

Syncing changes helps you and your whole team build and publish faster.

How it works

For example, let’s say you used Netlify Drop to publish your project without setting up a Git workflow.

Next, you decide to use Agent Runners to add a new landing page.

You also start an agent run to update your project’s footer and publish the new footer.

Your agent run for the new landing page doesn’t include the footer changes yet. To get these updates, start a sync run from the Agent Runners dashboard. This will apply all new updates from the live production version of your project.

Now when you publish your landing page updates, you’ll get the updated footer as well.

Syncing changes with Agent Runners enables smoother shipping with several agent runs and with multiple team members working on the same project.

-

Anthropic’s Claude Opus 4.6 model is now available through Netlify’s AI Gateway and Agent Runners with zero configuration required.

Use the Anthropic SDK directly in your Netlify Functions without managing API keys or authentication. The AI Gateway handles everything automatically. Here’s an example using the Claude Opus 4.6 model:

    import Anthropic from '@anthropic-ai/sdk';

    export default async () => {
      const anthropic = new Anthropic();

      const response = await anthropic.messages.create({
        model: 'claude-opus-4-6',
        max_tokens: 4096,
        messages: [
          {
            role: 'user',
            content: 'How can AI improve my coding?'
          }
        ]
      });

      return new Response(JSON.stringify(response), {
        headers: { 'Content-Type': 'application/json' }
      });
    };

Claude Opus 4.6 is available for all Function types and Agent Runners. You get automatic access to Netlify’s caching, rate limiting, and authentication infrastructure.

Learn more in the AI Gateway documentation and Agent Runners documentation.

-

Here are some Agent Runners improvements available to everyone on a Credit-based pricing plan:

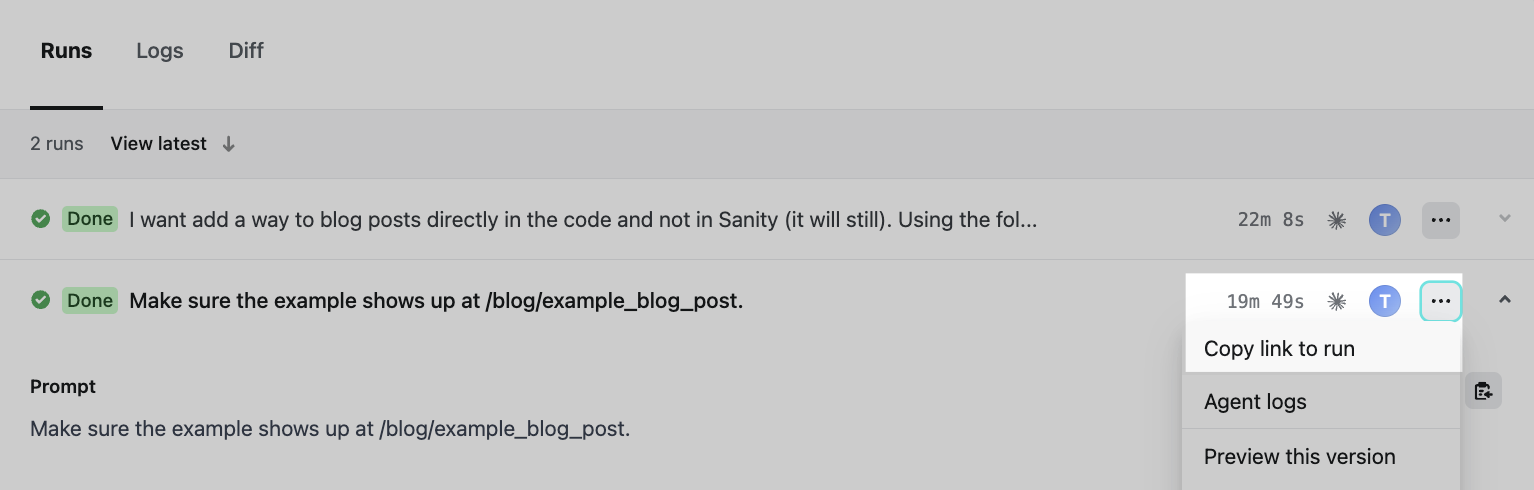

Shareable agent run links

You can now link directly to an agent run to share it with your team or bookmark for later review.

Agent Runners available no matter how you deploy

Agent Runners now supports static projects without build steps. Previously, projects without a build step couldn’t use Agent Runners.

Improved diff view performance

By default, the diff view now loads only the first 50 changed files with an option to load more. This improves performance for large projects.

Feedback welcome

Keep sharing your product feedback about Agent Runners in the feedback form at the bottom of our Agent Runners docs page.

And don’t forget: you can start multiple agent runs and keep working while they run, and you can also play a Netlify game while you wait for an agent to finish.

-

A denial-of-service (DoS) vulnerability (CVE-2026-23864, CVSS 7.5) has been disclosed affecting React Server Components (RSCs), a feature used by Next.js and other React metaframeworks. A malicious payload can cause memory exhaustion or excessive CPU consumption. Next.js has also disclosed two unrelated medium-severity CVEs (CVE-2025-59471, CVE-2025-59472) patched in the same releases. Here’s what Netlify customers need to know.

Impact on Netlify

Nominally, this is a server-side DoS vulnerability. On Netlify, its impact is minimal: our autoscaling serverless architecture means that a malicious request that crashes or hangs a function does not affect other requests. However, active exploitation could increase your function costs.

Affected frameworks

All RSC frameworks are affected:

- Next.js (see version table below)

- React Router 7 (if using RSC preview)

- Waku

- `@parcel/rsc`

- `@vitejs/plugin-rsc`

Astro, Gatsby, and Remix are not affected.

React affected versions

See the React blog post for full details.

| Affected versions | Fixed in |
| --- | --- |
| 19.0.0–19.0.3 | 19.0.4 |
| 19.1.0–19.1.4 | 19.1.5 |
| 19.2.0–19.2.3 | 19.2.4 |

Next.js affected versions

See the Next.js advisory for full details.

| Affected versions | Fixed in |
| --- | --- |
| 13.3.0+ | EOL - no fix |
| 14.x | EOL - no fix |
| 15.0.0–15.0.7 | 15.0.8 |
| 15.1.0–15.1.10 | 15.1.11 |
| 15.2.0–15.2.8 | 15.2.9 |
| 15.3.0–15.3.8 | 15.3.9 |
| 15.4.0–15.4.10 | 15.4.11 |
| 15.5.0–15.5.9 | 15.5.10 |
| 15.x canaries | 15.6.0-canary.61 |
| 16.0.0–16.0.10 | 16.0.11 |
| 16.1.0–16.1.4 | 16.1.5 |
| 16.x canaries | 16.2.0-canary.9 |

What should I do?

If any of your projects are using an affected version, we recommend upgrading as soon as possible to a patched release.

For Next.js 13.x and 14.x users: patches are not planned for these versions. Consider upgrading to Next.js 15.x or 16.x.
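If you need to audit several projects, a check along these lines may help. This is a rough sketch, not an official tool: `checkNextVersion`, `FIXED_IN`, and the `Verdict` labels are hypothetical names, the fixed versions are copied from the Next.js table in this entry, and canary or otherwise non-`x.y.z` version strings are deliberately reported as `not-listed` rather than evaluated.

```typescript
// Fixed versions per stable release line, copied from the Next.js table in this entry.
const FIXED_IN: Record<string, string> = {
  "15.0": "15.0.8",
  "15.1": "15.1.11",
  "15.2": "15.2.9",
  "15.3": "15.3.9",
  "15.4": "15.4.11",
  "15.5": "15.5.10",
  "16.0": "16.0.11",
  "16.1": "16.1.5",
};

// Hypothetical verdict labels used only by this sketch.
type Verdict = "patched" | "upgrade" | "eol-no-fix" | "not-listed";

function checkNextVersion(version: string): Verdict {
  // Only plain x.y.z versions are handled; canaries fall through to "not-listed".
  const match = /^(\d+)\.(\d+)\.(\d+)$/.exec(version);
  if (!match) return "not-listed";

  const [major, minor, patch] = match.slice(1).map(Number);

  // 13.3.0+ and 14.x are affected but end-of-life, so no patch is planned.
  if (major === 14 || (major === 13 && minor >= 3)) return "eol-no-fix";

  const fixed = FIXED_IN[`${major}.${minor}`];
  if (!fixed) return "not-listed";

  // Within a release line, compare the patch number against the fixed release.
  const fixedPatch = Number(fixed.split(".")[2]);
  return patch >= fixedPatch ? "patched" : "upgrade";
}

console.log(checkNextVersion("15.4.10")); // upgrade
console.log(checkNextVersion("15.4.11")); // patched
console.log(checkNextVersion("14.2.3")); // eol-no-fix
```

A `not-listed` result only means the version does not appear in the table as handled by this sketch; check the Next.js advisory before treating it as safe.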

Note that any publicly available deploy previews and branch deploys may remain vulnerable until they are automatically deleted. Consider deleting these deploys manually.

Resources